AI Governance and LLM Security for the Enterprise

Zscaler-Based AI Security with Inline SSL Inspection, DLP, and Access Controls

Generative AI is moving into the enterprise faster than most security and governance frameworks can keep up. Employees are already using AI tools across the business — often over encrypted traffic and outside traditional security controls.

AI can drive productivity, speed decision-making, and improve customer experiences. It can also create a new class of security and compliance risk.

Drawing on real-world experience in highly regulated environments—including financial services, capital markets, and global payroll systems—Hararei can help organizations safely adopt AI by combining Zscaler's cloud-delivered security with practical, policy-driven governance.

A New Class of Security Risks

In practice, most organizations already have AI usage happening today—they just don’t have visibility or control over it.

Without the right controls, organizations may be unable to reliably:

- Identify which AI platforms employees are using

- Prevent sensitive data from being submitted to AI tools

- Enforce acceptable-use policies for AI applications

- Maintain compliance with regulatory data protection obligations

Blocking AI entirely is not the answer. The goal is to enable AI securely—with visibility, governance, and real-time control.

How Zscaler helps protect AI adoption

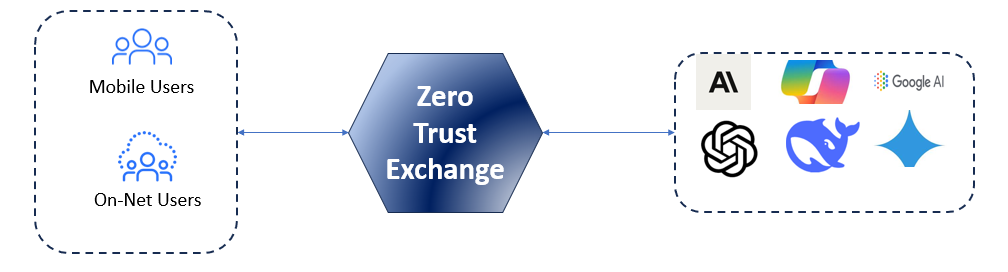

Zscaler Inspects All Traffic Going To The Internet, Including AI Applications

Visibility into AI usage

AI applications can be identified and categorized across the organization, including generative AI platforms, coding assistants, browser extensions, and AI-enabled SaaS services. This enables security teams to detect shadow AI, understand usage trends, and make informed policy decisions.

Data Loss Prevention for AI prompts

Inline inspection of web and SaaS traffic helps prevent sensitive data from being submitted to AI engines. DLP policies can block or alert on customer information, financial data, intellectual property, and regulated information before it leaves the organization.
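To make the idea concrete, the sketch below shows the kind of content check a DLP rule might perform on a prompt. It is a simplified illustration, not Zscaler's detection engine: the patterns, the function names (`luhn_valid`, `find_sensitive_data`), and the category labels are all assumptions chosen for the example.

```python
import re

# Hypothetical patterns for illustration only. Production DLP engines rely on
# much richer dictionaries, exact-data matching, and tuned validators.
CARD_RE = re.compile(r"\b(?:\d[ -]?){13,19}\b")
SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def luhn_valid(number: str) -> bool:
    """Return True if the digits in `number` pass the Luhn checksum."""
    digits = [int(ch) for ch in number if ch.isdigit()]
    if len(digits) < 13:
        return False
    total = 0
    # Walk from the rightmost digit, doubling every second one.
    for index, digit in enumerate(reversed(digits)):
        if index % 2 == 1:
            digit *= 2
            if digit > 9:
                digit -= 9
        total += digit
    return total % 10 == 0


def find_sensitive_data(prompt: str) -> list:
    """Return the categories of sensitive data detected in a prompt."""
    findings = []
    # A digit run only counts as a card number if the checksum also passes.
    if any(luhn_valid(m.group()) for m in CARD_RE.finditer(prompt)):
        findings.append("payment_card")
    if SSN_RE.search(prompt):
        findings.append("us_ssn")
    return findings
```

Pairing the pattern with a checksum is a common way to reduce false positives: a long order number may look like a card number, but it is unlikely to pass the Luhn check.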

AI Access and Usage Controls

Organizations can control not only which AI services employees may access, but also how those services are used. Policies can allow approved AI tools, block unsanctioned or high-risk services, and restrict access by role, department, or device posture. Session controls can also limit actions such as uploads, copy/paste, and other risky interactions.
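The decision logic described above can be sketched as a small policy function. This is an illustrative model only, not Zscaler configuration or API code; the host names, role names, and restriction labels are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical catalog and roles, assumed for this example.
SANCTIONED_APPS = {"approved-assistant.example.com"}
BLOCKED_APPS = {"risky-ai.example.net"}
ROLES_ALLOWED_UPLOADS = {"data-science"}


@dataclass(frozen=True)
class AccessRequest:
    app_host: str
    role: str
    device_managed: bool


def decide(request: AccessRequest) -> dict:
    """Return the access decision and any session restrictions that apply."""
    if request.app_host in BLOCKED_APPS:
        return {"action": "block", "restrictions": []}
    if request.app_host not in SANCTIONED_APPS:
        # Unsanctioned but not explicitly blocked: open in a restricted session.
        return {"action": "isolate", "restrictions": ["no_upload", "no_paste"]}
    restrictions = []
    if not request.device_managed:
        # Unmanaged devices get the tightest session controls.
        restrictions += ["no_upload", "no_paste"]
    elif request.role not in ROLES_ALLOWED_UPLOADS:
        restrictions.append("no_upload")
    return {"action": "allow", "restrictions": restrictions}
```

For example, `decide(AccessRequest("approved-assistant.example.com", "finance", True))` allows the session but withholds uploads, because the finance role is not in the assumed upload list.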

Inline SSL Inspection

Most AI applications operate over encrypted HTTPS. Zscaler decrypts and inspects traffic inline, enabling organizations to inspect prompts, enforce policy, and detect sensitive data exposure in ways that traditional perimeter tools cannot.

CASB and Browser Isolation Controls

Through inline CASB and browser isolation capabilities, Zscaler can enforce granular controls over user interactions within AI and cloud applications. These controls can block copy/paste into prompts, restrict file uploads, prevent downloads of AI-generated files, isolate unsanctioned applications, and enforce restricted sessions for unmanaged devices.

AI Guard for AI-specific Protection

Zscaler AI Guard extends protection beyond app access by inspecting both prompts and responses in real time. It adds AI-specific protections such as prompt inspection, DLP for AI interactions, detection of prompt injection and jailbreak attempts, and content moderation for unsafe or non-compliant output.

These risks are not theoretical. In real environments, organizations are already seeing sensitive data shared with AI platforms, often without malicious intent—simply due to lack of visibility and control.

A practical approach to secure AI enablement

With Zscaler, organizations can move from unmanaged AI adoption to policy-driven AI enablement by:

- Discovering which AI applications are in use

- Allowing only approved AI tools and use cases

- Preventing sensitive data leakage into AI prompts

- Governing user actions inside AI applications

- Detecting AI-specific threats in real time

- Supporting compliance and audit requirements with better visibility and logging

Supporting Data Sovereignty and Global Data Protection Requirements

Data protection regulations require organizations to control how sensitive data is used and shared. Generative AI introduces a new risk: employees can unknowingly submit regulated or confidential data to external AI platforms, often without the security team's knowledge.

Zscaler helps address this by inspecting prompts, enforcing data protection policies, and restricting AI usage to approved workflows — ensuring AI adoption aligns with security and compliance requirements.

Why Hararei

Hararei brings practical, real-world experience securing sensitive data in complex, regulated environments—including financial services, capital markets, and global enterprise platforms.

We understand that securing AI is not just a technology problem—it is a policy, governance, and operational challenge. Our approach focuses on aligning Zscaler capabilities with how organizations actually use data, applications, and AI in production environments.

From initial visibility into AI usage, to defining enforceable policies, to implementing controls without disrupting the business, Hararei helps organizations move from theoretical AI risk to practical, secure AI enablement.

Advanced AI-Driven Security Architecture Deep Dive with Zscaler FAQ

Achieve next-generation, AI-enhanced protection by combining Zscaler's platform with Hararei's expertise.

1. What AI-related risks does Zscaler help protect against?

Zscaler helps organizations address key risks associated with AI usage, including the unintended exposure of sensitive data through prompts, the use of unsanctioned AI applications, and interactions with potentially harmful or manipulated AI content. By inspecting traffic inline through the Zero Trust Exchange, Zscaler ensures that policies are enforced before data is transmitted to external AI platforms, reducing the likelihood of data leakage or misuse.

2. How does Zscaler prevent sensitive data from being shared with AI tools like ChatGPT?

Zscaler uses integrated Data Loss Prevention capabilities within Zscaler Internet Access to inspect user interactions with AI platforms in real time. When a user submits a prompt or uploads data, Zscaler analyzes the content for sensitive information such as personal data, financial records, or intellectual property. Based on defined policies, it can block the request, allow it with modifications, or log the activity for further review, ensuring that sensitive data is not inadvertently exposed.
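The three outcomes mentioned above (block, allow with modifications, log) can be illustrated with a short sketch. It is a simplified model under stated assumptions, not Zscaler's policy engine: the `CONFIDENTIAL` marker, the email pattern, and the action names are hypothetical.

```python
import re
from typing import Optional, Tuple

# Simplified email pattern, assumed for this example.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# Hypothetical marker an organization might stamp on restricted documents.
RESTRICTED_MARKER = "CONFIDENTIAL"


def apply_policy(prompt: str) -> Tuple[str, Optional[str]]:
    """Return (action, text_to_forward); the text is None when blocked."""
    if RESTRICTED_MARKER in prompt.upper():
        # Restricted content never leaves the organization.
        return "block", None
    redacted, count = EMAIL_RE.subn("[REDACTED_EMAIL]", prompt)
    if count:
        # Allow with modifications: forward the prompt with emails masked.
        return "allow_redacted", redacted
    # Nothing sensitive found: forward unchanged and keep a log entry.
    return "allow_logged", prompt
```

Redaction is a middle path between blocking and allowing: the user still gets an answer, while the personal data stays out of the request.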

3. Can Zscaler control which AI applications users are allowed to access?

Zscaler provides full visibility and control over AI application usage through its cloud access security broker functionality. It can identify AI applications being accessed across the organization and distinguish between sanctioned and unsanctioned tools. Policies can then be applied to allow access, restrict usage, or completely block certain applications, helping organizations prevent uncontrolled or risky adoption of AI services.

4. How does Zscaler protect against prompt injection or malicious AI responses?

Zscaler reduces exposure to prompt injection and malicious outputs by inspecting outbound requests and applying security policies that identify suspicious or high-risk interactions. In addition, it can use browser isolation so that responses from AI platforms are rendered in a controlled environment rather than on the user's device. This approach limits the potential impact of malicious content without requiring changes to the underlying AI models themselves.

5. Does Zscaler provide visibility into how employees are using AI tools?

Zscaler provides detailed visibility into user activity across AI platforms, allowing organizations to understand how these tools are being used in practice. This includes tracking which applications are accessed, how frequently they are used, and, depending on policy configuration, the nature of the interactions. This level of insight enables security and compliance teams to assess risk and refine governance strategies around AI adoption.

6. How does Zscaler enforce AI security policies for remote users?

Because Zscaler operates as a cloud-native platform, it applies consistent security policies regardless of where users are located. All traffic is routed through the Zero Trust Exchange, whether users are on a corporate network, at home, or traveling. This ensures that interactions with AI services are always subject to the same inspection and control mechanisms, eliminating gaps that might otherwise arise in remote or hybrid work environments.

7. Can Zscaler isolate AI sessions to prevent data leakage?

Zscaler can isolate AI sessions using its browser isolation capabilities, which execute web sessions in a secure, remote environment rather than on the user’s device. This allows organizations to tightly control how users interact with AI tools by restricting actions such as copying, pasting, downloading, or uploading data. As a result, sensitive information is prevented from being exposed either to the AI service or to the endpoint.

8. How does Zscaler support compliance requirements for AI usage?

Zscaler supports compliance efforts by enforcing data protection policies on all interactions with AI services and maintaining detailed audit logs of user activity. Organizations can define how data is handled, ensure that sensitive information is not transmitted to external platforms, and demonstrate adherence to regulatory requirements through reporting and monitoring capabilities. This enables safe and governed adoption of AI within regulated environments.

9. How does Zscaler simplify reporting and compliance for AI usage?

Zscaler simplifies reporting and compliance by providing centralized, easy-to-consume visibility into all user interactions with AI applications. Through integrated logging and analytics across the Zero Trust Exchange, organizations can quickly generate reports that show who is using AI tools, what data is being shared, and whether policies are being enforced. This allows security, risk, and compliance teams to demonstrate adherence to internal policies and external regulations without relying on multiple tools or manual data collection, significantly reducing the operational burden of governing AI usage.
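As a rough illustration of the reporting described above, the sketch below aggregates exported usage records into a summary of who is using which AI tools and how often policy blocked a request. The record fields (`user`, `app`, `action`) and the sample data are assumptions for the example and do not reflect Zscaler's actual log schema.

```python
from collections import Counter

# Hypothetical log records; the field names are assumed for this example.
SAMPLE_LOGS = [
    {"user": "alice", "app": "assistant-a", "action": "allowed"},
    {"user": "bob", "app": "assistant-a", "action": "blocked"},
    {"user": "alice", "app": "assistant-b", "action": "blocked"},
    {"user": "alice", "app": "assistant-a", "action": "allowed"},
]


def summarize(records):
    """Aggregate AI usage records into per-app and per-user counts."""
    requests_by_app = Counter(r["app"] for r in records)
    blocked_by_user = Counter(
        r["user"] for r in records if r["action"] == "blocked"
    )
    users_by_app = {}
    for r in records:
        users_by_app.setdefault(r["app"], set()).add(r["user"])
    return {
        "requests_by_app": dict(requests_by_app),
        "blocked_by_user": dict(blocked_by_user),
        # Sort user names so the report is stable between runs.
        "users_by_app": {app: sorted(u) for app, u in users_by_app.items()},
    }
```

Calling `summarize(SAMPLE_LOGS)` returns a dictionary that a compliance team could feed into a dashboard or a periodic audit report.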

Secure AI Adoption — Without Slowing The Business

Speak with Hararei to understand how Zscaler can help your organization gain visibility into AI usage, prevent data exposure, and implement practical, enforceable governance.

Contact Us

Please contact Hararei for an in-depth discussion on using any of our cloud or cybersecurity products or services.